8.4. Working with Problem Components

This section introduces the core set of problem types that course teams can add to any course by using the problem component. It also describes editing options and settings for problem components.

For information about specific problem types, and the other exercises and tools that you can add to your course, see Problems, Exercises, and Tools.

8.4.1. Adding a Problem

To add interactive problems to a course in Studio, you go to the course outline, open a unit, and select Problem. You then choose the type of problem that you want to add from the Common Problem Types list or the Advanced list.

The common problem types include relatively straightforward CAPA problems such as multiple choice and text or numeric input. The advanced problem types can be more complex to set up, such as math expression input, open response assessment, or custom JavaScript problems.

The common and advanced problem types that the problem component lists are the core set of problems that every course team can include in a course. You can also enable more exercises and tools for use in your course. For more information, see Enabling Additional Exercises and Tools.

8.4.1.1. Adding Graded or Ungraded Problems

When you establish the grading policy for your course, you define the assignment types that count toward learners’ grades: for example, homework, labs, midterm, final, and participation. You specify one of these assignment types for each of the subsections in your course.

As you develop your course, you can add problem components to a unit in any subsection. The problem components that you add automatically inherit the assignment type that is defined at the subsection level. For example, all of the problem components that you add to a unit in the midterm subsection are graded.

For more information, see Set the Assignment Type and Due Date for a Subsection.

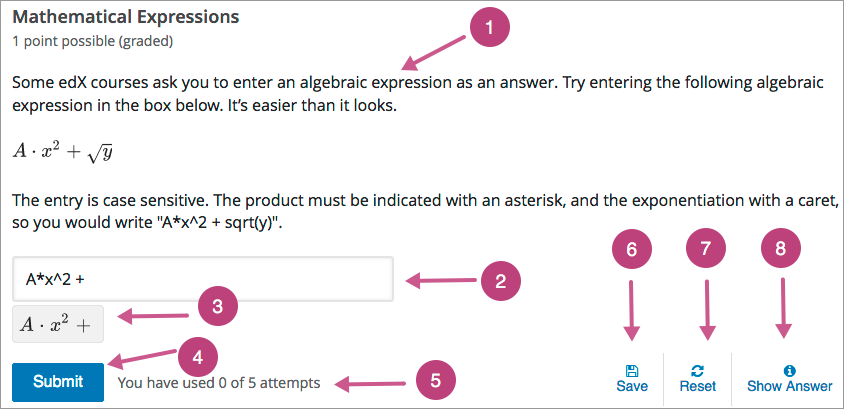

8.4.2. The Learner View of a Problem

All problems on the edX platform have these component parts, some of which can be configured. For configurable options, you can specify whether and when an option is available in problems.

Problem text. The problem text can contain any standard HTML formatting.

Within the problem text for each problem component, you must identify a question or prompt, which is, specifically, the question that learners need to answer. This question or prompt also serves as the required accessible label, and is used by screen readers, reports, and Insights. For more information about identifying the question text in your problem, see The Simple Editor.

Response field. Learners enter answers in response fields. The appearance of the response field depends on the type of the problem.

Rendered answer. For some problem types, the LMS uses MathJax to render plain text as “beautiful math.”

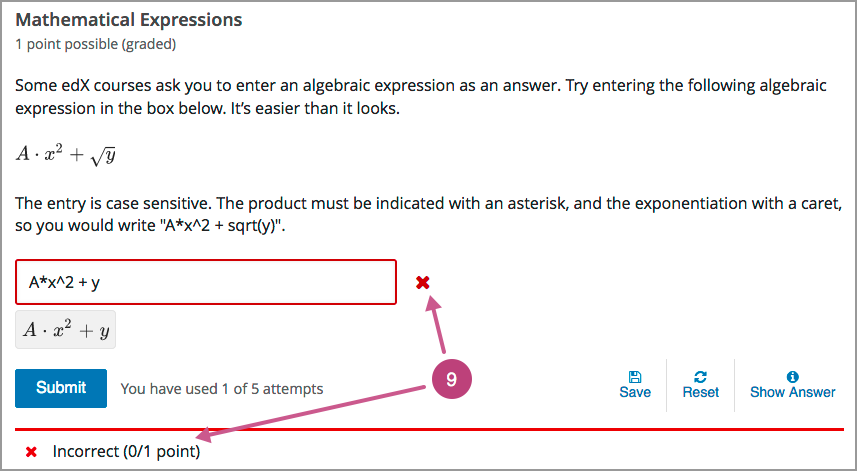

Submit. When a learner selects Submit to submit a response for a problem, the LMS saves the grade and current state of the problem. The LMS immediately provides feedback about whether the response is correct or incorrect, as well as the problem score. The Submit option remains available if the learner has unused attempts remaining, so that the learner can try to answer the problem again.

Note

If you want to temporarily or permanently hide learners’ results for the problem, see Set Problem Results Visibility.

Attempts. You can set a specific number of attempts or allow unlimited attempts for a problem. By default, the course-wide Maximum Attempts advanced setting is null, meaning that the maximum number of attempts for problems is unlimited.

In courses where a specific number has been specified for Maximum Attempts in Advanced Settings, if you do not specify a value for Maximum Attempts for an individual problem, the number of attempts for that problem defaults to the number of attempts defined in Advanced Settings.

Save. The learner can select Save to save the current response without submitting it for grading. This allows the learner to stop working on a problem and come back to it later.

Reset. You can specify whether the Reset option is available for a problem. This setting at the problem level overrides the default setting for the course in Advanced Settings.

If the Reset option is available, learners can select Reset to clear any input that has not yet been submitted, and try again to answer the question.

If the learner has already submitted an answer, selecting Reset clears the submission and, if the problem includes a Python script to randomize variables and the randomization setting is On Reset, changes the values the learner sees in the problem.

If the problem has already been answered correctly, Reset is not available.

If the number of Maximum Attempts that was set for this problem has been reached, Reset is not available.
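The conditions that govern the Reset option can be summarized in a short Python sketch. This is only an illustration of the rules described in this section, not the platform's implementation; the function name and its parameters are hypothetical.

```python
from typing import Optional


def reset_available(
    show_reset: bool,
    answered_correctly: bool,
    attempts_used: int,
    max_attempts: Optional[int],  # None means unlimited attempts
    closed: bool = False,         # for example, the due date has passed
) -> bool:
    """Return True if the Reset option would be offered to the learner."""
    if not show_reset or closed:
        return False
    if answered_correctly:
        # A problem that was answered correctly cannot be reset.
        return False
    if max_attempts is not None and attempts_used >= max_attempts:
        # All attempts are used, so Reset is not available.
        return False
    return True
```

For example, `reset_available(True, False, 1, 3)` is true, while the same call with `attempts_used=3` is false.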

Show Answer. You can specify whether this option is available for a problem. If a learner selects Show Answer, the learner sees both the correct answer and the explanation, if any.

If you specify a number in Show Answer: Number of Attempts, the learner must submit at least that number of attempted answers before the Show Answer option is available for the problem.

Feedback. After a learner selects Submit, an icon appears beside each response field or selection within a problem. A green check mark indicates that the response was correct, a green asterisk (*) indicates that the response was partially correct, and a red X indicates that the response was incorrect. Underneath the problem, feedback text indicates whether the problem was answered correctly, incorrectly, or partially correctly, and shows the problem score.

Note

If you want to temporarily or permanently hide learners’ results for the problem, see Set Problem Results Visibility.

In addition to the items above, which are shown in the example, problems also have the following elements.

Correct answer. Most problems require that you specify a single correct answer.

Note

If you want to temporarily or permanently hide learners’ results for the problem, see Set Problem Results Visibility.

Explanation. You can include an explanation that appears when a learner selects Show Answer.

Grading. You can specify whether a group of problems is graded.

Due date. The date that the problem is due. Learners cannot submit answers for problems whose due dates have passed, although they can select Show Answer to show the correct answer and the explanation, if any.

Note

Problems can be open or closed. Closed problems, such as problems whose due dates are in the past, do not accept further responses and cannot be reset. Learners can still see questions, solutions, and revealed explanations, but they cannot submit responses or reset problems.

There are also some attributes of problems that are not immediately visible. You can set these attributes in Studio.

Accessible Label. Within the problem text, you can identify the text that is, specifically, the question that learners need to answer. The text that is labeled as the question is used by screen readers, reports, and Insights. For more information, see The Simple Editor.

Randomization. In certain types of problems, you can include a Python script to randomize the values that are presented to learners. You use this setting to define when values are randomized. For more information, see Randomization.

Weight. Different problems in a particular problem set can be given different weights. For more information, see Problem Weight.

8.4.3. Editing a Problem in Studio

When you select Problem and choose one of the problem types, Studio adds an example problem of that type to the unit. To replace the example with your own problem, you select Edit to open the example problem in an editor.

The editing interface that opens depends on the type of problem you choose.

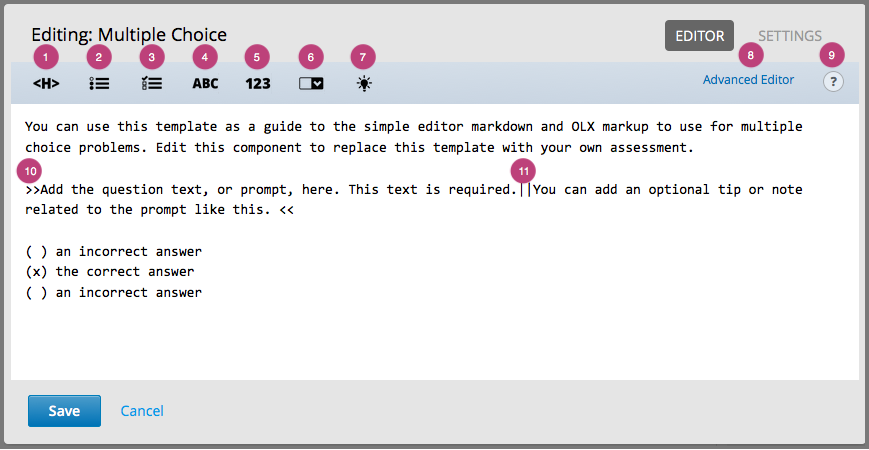

For common problem types, the simple editor opens. In this editor, you use Markdown-style formatting indicators to identify the elements of the problem, such as the prompt and the correct and incorrect answer options.

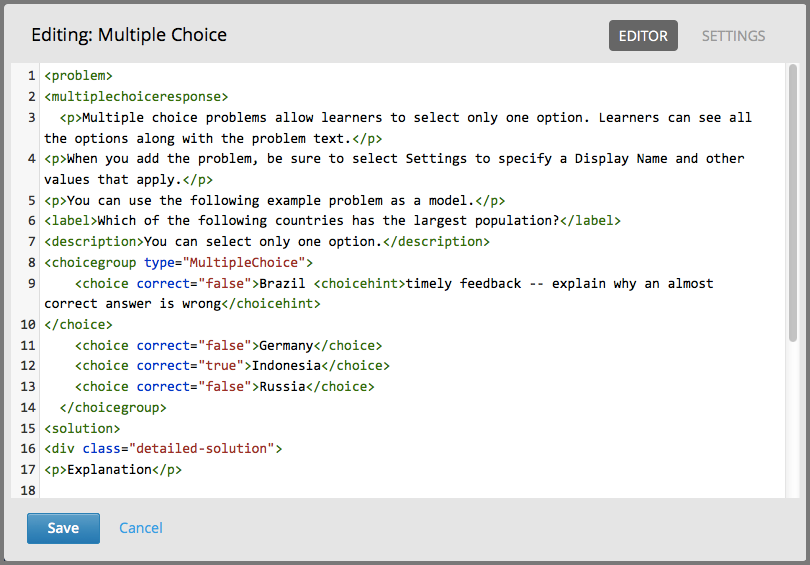

For advanced problem types (with the exception of open response assessment), the advanced editor opens. In this editor you use open learning XML (OLX) elements and attributes to identify the elements of the problem.

For open response assessment problem types, you define the problem elements and options by using a graphical user interface. For more information, see Create an Open Response Assessment Assignment.

You can switch from the simple editor to the advanced editor at any time by selecting Advanced Editor from the simple editor’s toolbar.

Note

After you save a problem in the advanced editor, you cannot open it again in the simple editor.

8.4.3.1. The Simple Editor

When you edit one of the common problem types, the simple editor opens with a template that you can use as a guideline for adding Markdown formatting. The following templates are available.

Checkbox Problem and Checkboxes with Hints and Feedback

Dropdown Problem and Dropdown with Hints and Feedback

Multiple Choice Problem and Multiple Choice with Hints and Feedback

Numerical Input Problem and Numerical Input with Hints and Feedback

Text Input Problem and Text Input with Hints and Feedback

Blank common problems also open in the simple editor but they do not provide a template.

8.4.3.1.1. Adding Markdown Formatting

The following image shows the multiple choice template in the simple editor.

The simple editor includes a toolbar with options that provide the required Markdown formatting for different types of problems. When you select an option from the toolbar, formatted sample text appears in the simple editor. Alternatively, you can apply formatting to your own text by selecting the text and then one of the toolbar options.

Descriptions of the Markdown formatting that you use in the simple editor follow.

Heading: Identifies a title or heading by adding a series of equals signs (=) below it on the next line.

Multiple Choice: Identifies an answer option for a multiple choice problem by adding a pair of parentheses (( )) before it. To identify the correct answer option, you insert an x within the parentheses: ((x)).

Checkboxes: Identifies an answer option for a checkboxes problem by adding a pair of brackets ([ ]) before it. To identify the correct answer option or options, you insert an x within the brackets: ([x]).

Text Input: Identifies the correct answer for a text input problem by adding an equals sign (=) before the answer value on the same line.

Numerical Input: Identifies the correct answer for a numerical input problem by adding an equals sign (=) before the answer value on the same line.

Dropdown: Identifies a comma-separated list of values as the set of answer options for a dropdown problem by adding two pairs of brackets ([[ ]]) around the list. To identify the correct answer option, you add parentheses (( )) around that option.

Explanation: Identifies the explanation for the correct answer by adding an [explanation] tag to the lines before and after the text. The explanation appears only after learners select Show Answer. You define when the Show Answer option is available to learners by using the Show Answer setting.

Advanced Editor link: Opens the problem in the advanced editor, which shows the OLX markup for the problem.

Toggle Cheatsheet: Opens a list of formatting hints.

Question or Prompt: Identifies the question that learners need to answer. The toolbar does not have an option that provides this formatting, so you add two pairs of inward-pointing angle brackets (>> <<) around the question text. For example, >>Is this the question?<<.

You must identify a question or prompt in every problem component. In problems that include multiple questions, you must identify each one.

The Student Answer Distribution report uses the text with this formatting to identify each problem.

Insights also uses the text with this formatting to identify each problem. For more information, see Using edX Insights.

Description: Identifies optional guidance that helps learners answer the question. For example, when you add a checkbox problem that is only correct when learners select three of the answer options, you might include the description, “Be sure to select all that apply.” The toolbar does not have an option that provides this formatting, so you add it after the question within the angle brackets, and then you separate the question and the description by inserting a pair of pipe symbols (||) between them. For example, >>Which of the following choices is correct? ||Be sure to select all that apply.<<.

8.4.3.1.2. Adding Text, Symbols, and Mathematics

You can also add text, without formatting, to a problem. Note that screen readers read all of the text that you supply for the problem, and then repeat the text that is identified as the question or prompt immediately before reading the answer choices for the problem. For problems that require descriptions or other text, you might consider adding an HTML component for the text immediately before the problem component.

When you enter unformatted text, note that the simple editor cannot interpret certain symbol characters correctly. These symbols are reserved HTML characters: greater than (>), less than (<), and ampersand (&). If you enter text that includes these characters, the simple editor cannot save your edits. To resolve this problem, replace these characters in your problem text with the HTML entities that represent them.

To enter >, type &gt;.

To enter <, type &lt;.

To enter &, type &amp;.
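If you prepare problem text outside Studio, you can make the same three replacements with a script. The following is a minimal Python sketch that uses the standard library's `html.escape`; the helper name `escape_problem_text` is hypothetical and is not part of Studio.

```python
import html


def escape_problem_text(text: str) -> str:
    """Replace &, <, and > with the HTML entities &amp;, &lt;, and &gt;."""
    # quote=False leaves quotation marks as they are; only the three
    # reserved characters named above are replaced.
    return html.escape(text, quote=False)


print(escape_problem_text("If x < 5 & y > 2, what is x + y?"))
# prints: If x &lt; 5 &amp; y &gt; 2, what is x + y?
```

Apply a helper like this only to plain, unformatted text. Running it over an entire problem would also replace the angle brackets in the >> << markers that identify the question or prompt.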

To add mathematics, you can use LaTeX, MathML, or AsciiMath notation. Studio uses MathJax to render equations. For more information, see Using MathJax for Mathematics.

8.4.3.2. The Advanced Editor

When you edit one of the advanced problem types, the advanced editor opens with an example problem. The advanced editor is an XML editor that shows the OLX markup for a problem. You edit the following advanced problem types in the advanced editor.

Drag and Drop (Deprecated)

Problem with Adaptive Hint and Problem with Adaptive Hint in LaTex

For the Open Response Assessment advanced problem type, a dialog box opens for problem setup.

Blank advanced problems do not provide an example problem, but they also open in the advanced editor by default.

The following image shows the OLX markup in the advanced editor for the same example multiple choice problem that is shown in the simple editor above.

For more information about the OLX markup to use for a problem, see the topic that describes that problem type.

8.4.4. Defining Settings for Problem Components

In addition to the text of the problem and its Markdown formatting or OLX markup, you define the following settings for problem components. To access these settings, you edit the problem and then select Settings.

If you do not edit these settings, default values are supplied for your problems.

Note

If you want to temporarily or permanently hide problem results from learners, you use the subsection-level Results Visibility setting. You cannot change the visibility of individual problems. For more information, see Set Problem Results Visibility.

8.4.4.1. Display Name

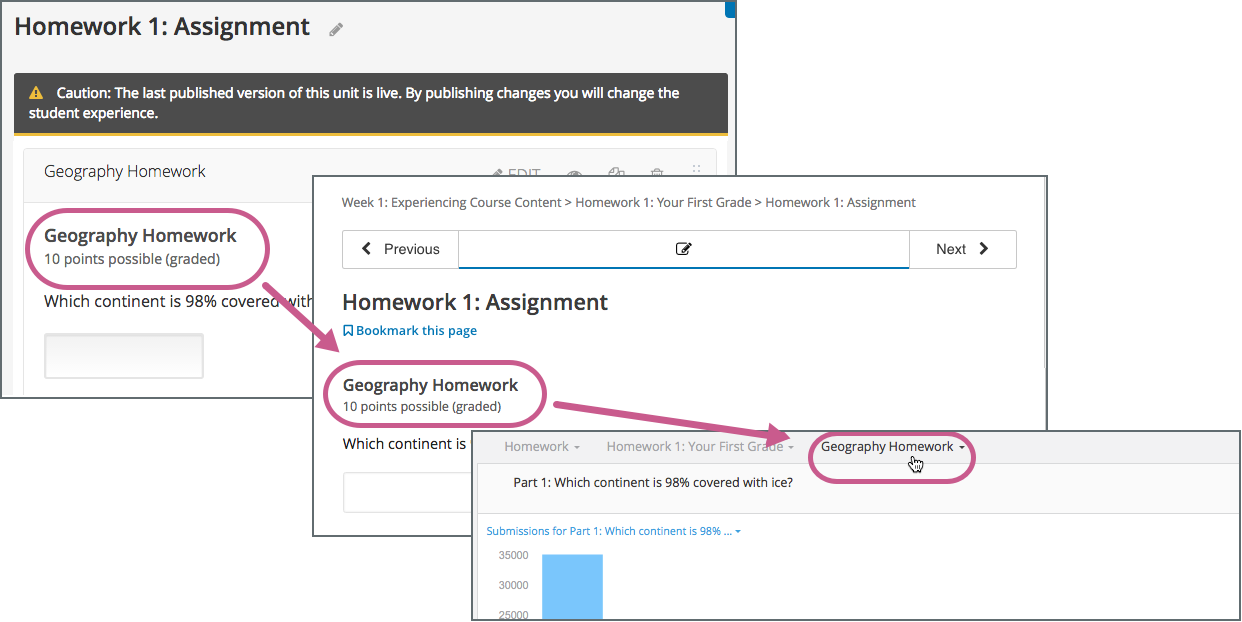

This required setting provides an identifying name for the problem. The display name appears as a heading above the problem in the LMS, and it identifies the problem for you in Insights. Be sure to add unique, descriptive display names so that you, and your learners, can identify specific problems quickly and accurately.

The following illustration shows the display name of a problem in Studio, in the LMS, and in Insights.

For more information about metrics for your course’s problem components, see Using edX Insights.

8.4.4.2. Maximum Attempts

This setting specifies the number of times that a learner is allowed to try to answer this problem correctly. You can define a different Maximum Attempts value for each problem.

A course-wide Maximum Attempts setting defines the default value for this problem-specific setting. Initially, the value for the course-wide setting is null, meaning that learners can try to answer problems an unlimited number of times. You can change the course-wide default by selecting Settings and then Advanced Settings. Note that if you change the course-wide default from null to a specific number, you can no longer change the problem-specific Maximum Attempts value to unlimited.
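The way the problem-specific value and the course-wide default interact can be sketched in a few lines of Python. This is an illustration of the behavior described above, under the assumption that a blank problem-level value is represented as `None`; the function name is hypothetical.

```python
from typing import Optional


def effective_max_attempts(
    problem_value: Optional[int],   # None: no value set for this problem
    course_default: Optional[int],  # None (null): unlimited attempts
) -> Optional[int]:
    """Return the attempt limit that applies to a problem (None = unlimited)."""
    if problem_value is not None:
        return problem_value
    # A problem without its own value inherits the course-wide default.
    return course_default
```

In this model, once the course-wide default is a specific number, leaving the problem-level value blank yields that number rather than unlimited attempts, which matches the limitation noted above.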

Only problems that have a Maximum Attempts setting of 1 or higher are included in the answer distribution computations used in edX Insights and the Student Answer Distribution report.

Note

EdX recommends setting Maximum Attempts to unlimited or a large number when possible. Problems that allow unlimited attempts encourage risk taking and experimentation, both of which lead to improved learning outcomes. However, allowing for unlimited attempts might not be feasible in some courses, such as those that use primarily multiple choice or dropdown problems in graded subsections.

8.4.4.3. Problem Weight

Note

The LMS scores all problems. However, only scores for problem components that are in graded subsections count toward a learner’s final grade.

This setting specifies the total number of points possible for the problem. In the LMS, the problem weight appears near the problem’s display name.

By default, each response field, or answer space, in a problem component is worth one point. You increase or decrease the number of points for a problem component by setting its Problem Weight.

In the example shown above, a single problem component includes three separate questions. To respond to these questions, learners select answer options from three separate dropdown lists, the response fields for this problem. By default, learners receive one point for each question that they answer correctly.

For information about how to define a problem that includes more than one question, see Including Multiple Questions in One Component.

8.4.4.3.1. Computing Scores

The score that a learner earns for a problem is the result of the following formula.

Score = Weight × (Correct answers / Response fields)

Score is the point score that the learner receives.

Weight is the problem’s maximum possible point score.

Correct answers is the number of response fields that contain correct answers.

Response fields is the total number of response fields in the problem.

Examples

The following are some examples of computing scores.

Example 1

A problem’s Problem Weight setting is left blank. The problem has two response fields. Because the problem has two response fields, the maximum score is 2.0 points.

If one response field contains a correct answer and the other response field contains an incorrect answer, the learner’s score is 1.0 out of 2 points.

Example 2

A problem’s weight is set to 12. The problem has three response fields.

If a learner’s response includes two correct answers and one incorrect answer, the learner’s score is 8.0 out of 12 points.

Example 3

A problem’s weight is set to 2. The problem has four response fields.

If a learner’s response contains one correct answer and three incorrect answers, the learner’s score is 0.5 out of 2 points.
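The formula and the three examples above can be checked with a short Python sketch. The function and parameter names are hypothetical; a weight of `None` stands for a Problem Weight setting that is left blank, in which case each response field is worth one point.

```python
from typing import Optional


def problem_score(
    correct_answers: int,
    response_fields: int,
    weight: Optional[float] = None,
) -> float:
    """Score = Weight x (Correct answers / Response fields)."""
    if response_fields <= 0:
        raise ValueError("a problem needs at least one response field")
    if not 0 <= correct_answers <= response_fields:
        raise ValueError("correct answers must be between 0 and response fields")
    # With no weight set, the maximum score equals the number of response fields.
    max_points = float(response_fields) if weight is None else float(weight)
    return max_points * correct_answers / response_fields


print(problem_score(1, 2))             # Example 1: 1.0 out of 2 points
print(problem_score(2, 3, weight=12))  # Example 2: 8.0 out of 12 points
print(problem_score(1, 4, weight=2))   # Example 3: 0.5 out of 2 points
```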

8.4.4.4. Randomization

Note

This Randomization setting serves a different purpose from “problem randomization”. This Randomization setting affects how numeric values are randomized within a single problem and requires the inclusion of a Python script. Problem randomization presents different problems or problem versions to different learners. For more information, see Problem Randomization.

For problems that include a Python script to generate numbers randomly, this setting specifies how frequently the values in the problem change: each time a different learner accesses the problem, each time a single learner tries to answer the problem, both, or never.

Note

This setting should only be set to an option other than Never for problems that are configured to do random number generation.

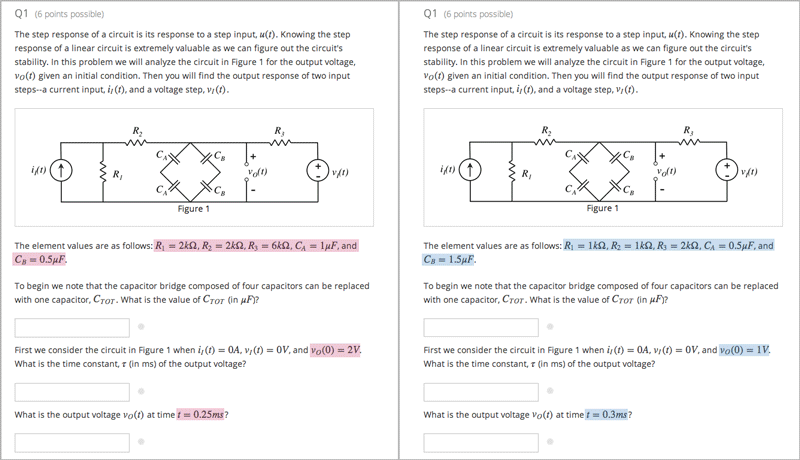

For example, in this problem, the highlighted values change each time a learner submits an answer to the problem.

If you want to randomize numeric values in a problem, you complete both of these steps.

Make sure that you edit your problem to include a Python script that randomly generates numbers.

Select an option other than Never for the Randomization setting.

The edX Platform has a 20-seed maximum for randomization. This means that learners see up to 20 different problem variants for every problem that has Randomization set to an option other than Never. It also means that every answer for the 20 different variants is reported by the Answer Distribution report and edX Insights. Limiting the number of variants to a maximum of 20 allows for better analysis of learner submissions by allowing you to detect common incorrect answers and usage patterns for such answers.

For more information, see Student Answer Distribution in this guide, or Review Answers to Graded Problems or Review Answers to Ungraded Problems in Using edX Insights.
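To see why a fixed number of seeds caps the number of variants, consider the following Python sketch. It is not the platform's code: how the platform assigns seeds is not described here, so the seed values 0 through 19 and the function `generate_values` are assumptions made for illustration.

```python
import random
from typing import Tuple

MAX_SEEDS = 20  # the 20-seed maximum described above


def generate_values(seed: int) -> Tuple[int, int]:
    """Generate the two numbers shown in a problem for a given seed."""
    rng = random.Random(seed)  # the same seed always yields the same values
    return rng.randint(1, 10), rng.randint(1, 10)


# Every learner is served one of at most MAX_SEEDS seeds, so at most
# MAX_SEEDS distinct variants of the problem can ever appear.
variants = {generate_values(seed) for seed in range(MAX_SEEDS)}
print(len(variants) <= MAX_SEEDS)  # prints: True
```

Because each seed reproduces the same values, answers can be grouped by variant in reports.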

Important

Whenever you choose an option other than Never for a problem, the computations for the Answer Distribution report and edX Insights include up to 20 variants for the problem, even if the problem was not actually configured to include randomly generated values. This can make the data collected for problems that cannot include randomly generated values (including, but not limited to, all multiple choice, checkboxes, dropdown, and text input problems) extremely difficult to interpret.

You can choose the following options for the Randomization setting.

| Option | Description |
|---|---|
| Always | Learners see a different version of the problem each time they select Submit. |
| On Reset | Learners see a different version of the problem each time they select Reset. |
| Never | All learners see the same version of the problem. For most courses, this option is supplied by default. Select this option for every problem in your course that does not include a Python script to generate random numbers. |
| Per Student | Individual learners see the same version of the problem each time they look at it, but that version is different from the version that other learners see. |

8.4.4.5. Show Answer

This setting adds a Show Answer option to the problem. The following options define when the answer is shown to learners.

| Option | Description |
|---|---|
| After All Attempts | Learners will be able to Show Answer after they have used all of their attempts. Requires max attempts to be set on the problem. |
| After All Attempts or Correct | Learners will be able to Show Answer after they have used all of their attempts or have correctly answered the question. If max attempts are not set, the learner will need to answer correctly before they can Show Answer. |
| After Some Number of Attempts | Learners will be able to Show Answer after they have attempted the problem a minimum number of times (this value is set by the course team in Studio). |
| Always | Always present the Show Answer option. Note: If you specify Always, learners can submit a response even after they select Show Answer to see the correct answer. |
| Answered | Learners will be able to Show Answer after they have correctly answered the problem. |
| Attempted | Learners will be able to Show Answer after they have made at least 1 attempt on the problem. If the problem can be, and is, reset, the answer continues to show. (When a learner answers a problem, the problem is considered to be both attempted and answered. When the problem is reset, the problem is still considered to have been attempted, but is not considered to be answered.) |
| Attempted or Past Due | Learners will be able to Show Answer after they have made at least 1 attempt on the problem or the problem’s due date is in the past. |
| Closed | Learners will be able to Show Answer after they have used all attempts on the problem or the due date for the problem is in the past. |
| Correct or Past Due | Learners will be able to Show Answer after they have correctly answered the problem or the due date for the problem is in the past. |
| Finished | Learners will be able to Show Answer after they have used all attempts on the problem or the due date for the problem is in the past or they have correctly answered the problem. |
| Never | Learners and Staff will never be able to Show Answer. |
| Past Due | Learners will be able to Show Answer after the due date for the problem is in the past. |
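The options listed above can be read as simple conditions on a learner's progress. The following Python sketch restates them as one lookup; it is a simplified reading of the descriptions and not the platform's implementation. The `ProblemState` class, its field names, and `can_show_answer` are hypothetical, and the sketch does not model how a reset changes whether a problem counts as answered.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProblemState:
    attempts_used: int
    max_attempts: Optional[int]  # None means unlimited attempts
    is_correct: bool             # the learner has answered correctly
    past_due: bool               # the due date is in the past
    min_attempts: int = 0        # minimum attempts set by the course team


def can_show_answer(option: str, s: ProblemState) -> bool:
    """Return True if Show Answer would be available for the given option."""
    used_all = s.max_attempts is not None and s.attempts_used >= s.max_attempts
    attempted = s.attempts_used >= 1
    rules = {
        "After All Attempts": used_all,
        "After All Attempts or Correct": used_all or s.is_correct,
        "After Some Number of Attempts": s.attempts_used >= s.min_attempts,
        "Always": True,
        "Answered": s.is_correct,
        "Attempted": attempted,
        "Attempted or Past Due": attempted or s.past_due,
        "Closed": used_all or s.past_due,
        "Correct or Past Due": s.is_correct or s.past_due,
        "Finished": used_all or s.past_due or s.is_correct,
        "Never": False,
        "Past Due": s.past_due,
    }
    return rules[option]
```

For a learner who has made one incorrect attempt out of three before the due date, `can_show_answer("Attempted", ...)` is true while `can_show_answer("Finished", ...)` is false.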

8.4.4.6. Show Answer: Number of Attempts

This setting limits when learners can select the Show Answer option for a problem. Learners must submit at least the specified number of attempted answers for the problem before the Show Answer option is available to them.

8.4.4.7. Show Reset Button

This setting defines whether a Reset option is available for the problem.

Learners can select Reset to clear any input that has not yet been submitted, and try again to answer the problem.

If the learner has already submitted an answer, selecting Reset clears the submission and, if the problem contains randomized variables and randomization is set to On Reset, changes the values in the problem.

If the number of Maximum Attempts that was set for this problem has been reached, the Reset option is not visible.

This problem-level setting overrides the course-level Show Reset Button for Problems advanced setting.

8.4.4.8. Timer Between Attempts

This setting specifies the number of seconds that a learner must wait between submissions for a problem that allows multiple attempts. If the value is 0, the learner can attempt the problem again immediately after an incorrect attempt.

Adding required wait time between attempts can help to prevent learners from simply guessing when multiple attempts are allowed.

If a learner attempts a problem again before the required time has elapsed, the learner sees a message below the problem indicating the remaining wait time. The format of the message is, “You must wait at least {n} seconds between submissions. {n} seconds remaining.”
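As an illustration of the wait-time rule, the following Python sketch builds the message in the format quoted above. The function name is hypothetical, and rounding the remaining time up to a whole second is an assumption; the source does not say how the platform rounds.

```python
import math
from typing import Optional


def wait_message(required_wait: int, seconds_since_last: float) -> Optional[str]:
    """Return the wait message, or None if the learner can submit now."""
    remaining = required_wait - seconds_since_last
    if remaining <= 0:
        # A timer of 0, or enough elapsed time, allows an immediate attempt.
        return None
    return (
        f"You must wait at least {required_wait} seconds between submissions. "
        f"{math.ceil(remaining)} seconds remaining."
    )


print(wait_message(30, 12))
# prints: You must wait at least 30 seconds between submissions. 18 seconds remaining.
```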

8.4.5. Including Multiple Questions in One Component

In some cases, you might want to design an assessment that combines multiple questions in a single problem component. For example, you might want learners to demonstrate mastery of a concept by providing the correct responses to several questions, and give them credit for the problem only if all of the answers are correct.

Another example involves learners who have slow or intermittent internet connections. When every problem appears on a separately loaded web page, these learners can find the amount of time it takes to complete an assignment or exam discouraging. For these learners, grouping several questions together can promote increased engagement with course assignments.

When you add multiple questions to a single problem component, the settings that you define, including the display name and whether to show the Reset button, apply to all of the questions in that component. The answers to all of the questions are submitted when learners select Submit, and the correct answers for all of the questions appear when learners select Show Answer. By default, learners receive one point for each question they answer correctly. For more information about changing the default problem weight and other settings, see Defining Settings for Problem Components.

Important

To ensure that the data collected for learner interactions with your problem components is complete and accurate, include a maximum of 10 questions in a single problem component.

8.4.5.1. Adding Multiple Questions to a Problem Component

To design an assignment that includes several questions, you add one problem component and then edit it to add every question and its answer options, one after the other, in that component. Be sure to identify the text of every question or prompt with the appropriate Markdown formatting (>> <<) or OLX <label> element, and include all of the other required elements for each question.

In the simple editor, you use three hyphen characters (---) on a new line to separate one question and its answer options from the next.

In the advanced editor, each question and its answer options are enclosed by the element that identifies the type of problem, such as <multiplechoiceresponse> for a multiple choice question or <formularesponse> for a math expression input question.

You can provide a different explanation for each question with the appropriate Markdown formatting ([explanation]) or OLX <solution> element.

As a best practice, edX recommends that you avoid including unformatted paragraph text between the questions. Screen readers can skip over text that is inserted among multiple questions.

The questions that you include can all be of the same problem type, such as a series of text input questions, or you can include questions that use different problem types, such as both numerical input and math expression input.

Note

You cannot use a Custom JavaScript Display and Grading Problem in a problem component that contains more than one question. Each custom JavaScript problem must be in its own component.

An example of a problem component that includes a text input question and a numerical input question follows. In the simple editor, the problem has the following Markdown formatting.

    >>Who invented the Caesar salad?||Be sure to check your spelling.<<

    = Caesar Cardini

    [explanation]
    Caesar Cardini is credited with inventing this salad and received a U.S. trademark for his salad dressing recipe.
    [explanation]

    ---

    >>In what year?<<

    = 1924

    [explanation]
    Cardini invented the dish at his restaurant on 4 July 1924 after the rush of holiday business left the kitchen with fewer supplies than usual.
    [explanation]

That is, you include three hyphen characters (---) on a new line to separate the problems.

In the advanced editor, the problem has the following OLX markup.

    <problem>
      <stringresponse answer="Caesar Cardini" type="ci">
        <label>Who invented the Caesar salad?</label>
        <description>Be sure to check your spelling.</description>
        <textline size="20"/>
        <solution>
          <div class="detailed-solution">
            <p>Explanation</p>
            <p>Caesar Cardini is credited with inventing this salad and received
            a U.S. trademark for his salad dressing recipe.</p>
          </div>
        </solution>
      </stringresponse>
      <numericalresponse answer="1924">
        <label>In what year?</label>
        <formulaequationinput/>
        <solution>
          <div class="detailed-solution">
            <p>Explanation</p>
            <p>Cardini invented the dish at his restaurant on 4 July 1924 after
            the rush of holiday business left the kitchen with fewer supplies
            than usual.</p>
          </div>
        </solution>
      </numericalresponse>
    </problem>
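Before you paste a long multi-question problem into the simple editor, you might want to check it against the guidance in this section. The following Python sketch splits simple-editor text at the `---` separator lines, counts the questions, and flags any question that lacks the >> << prompt markers. It is a hypothetical helper for authors, not the parser that Studio uses, and all names in it are assumptions.

```python
from typing import List

MAX_QUESTIONS = 10  # recommended maximum for a single problem component


def split_questions(markdown: str) -> List[str]:
    """Split simple-editor text into one chunk per question at '---' lines."""
    chunks: List[str] = []
    current: List[str] = []
    for line in markdown.splitlines():
        if line.strip() == "---":
            chunks.append("\n".join(current).strip())
            current = []
        else:
            current.append(line)
    chunks.append("\n".join(current).strip())
    return [chunk for chunk in chunks if chunk]


def check_component(markdown: str) -> List[str]:
    """Return a list of warnings; an empty list means no issue was found."""
    questions = split_questions(markdown)
    warnings: List[str] = []
    if len(questions) > MAX_QUESTIONS:
        warnings.append(f"{len(questions)} questions exceed the maximum of {MAX_QUESTIONS}.")
    for number, question in enumerate(questions, start=1):
        if ">>" not in question or "<<" not in question:
            warnings.append(f"Question {number} has no >>prompt<< marker.")
    return warnings


example = """>>Who invented the Caesar salad?||Be sure to check your spelling.<<
= Caesar Cardini
---
>>In what year?<<
= 1924
"""
print(len(split_questions(example)))  # prints: 2
print(check_component(example))       # prints: []
```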

8.4.6. Adding Feedback and Hints to a Problem

You can add feedback, hints, or both to the following core problem types: checkbox, dropdown, multiple choice, numerical input, and text input.

By using hints and feedback, you can provide learners with guidance and help as they work on problems.

8.4.6.1. Feedback in Response to Attempted Answers

You can add feedback that displays to learners after they submit an answer.

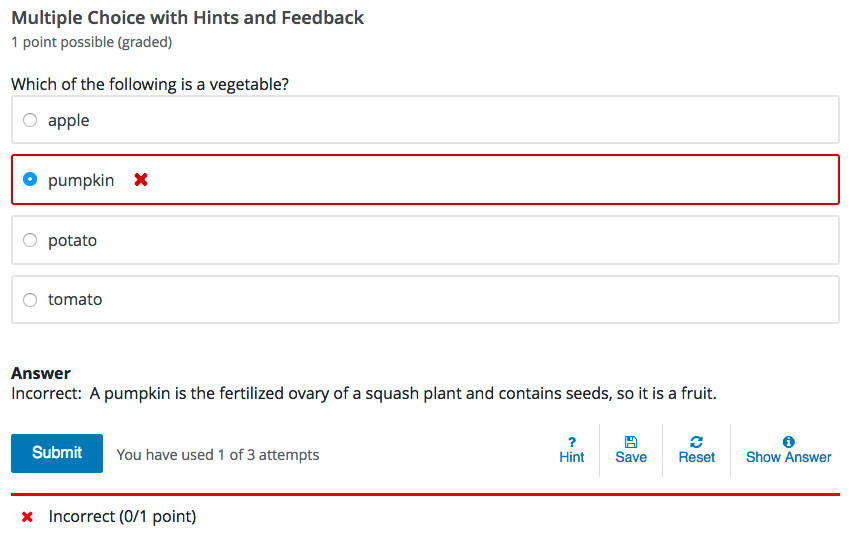

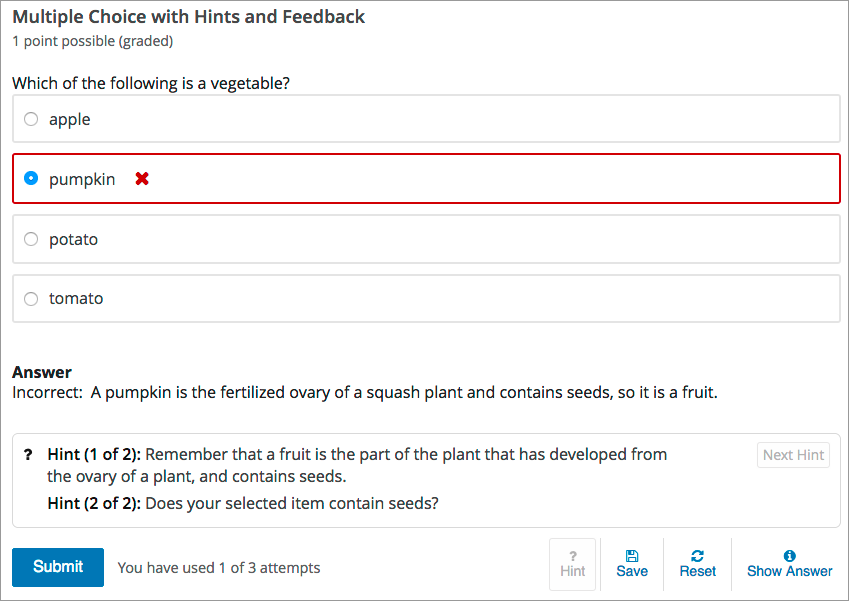

For example, the following multiple choice problem provides feedback in response to the selected option when the learner selects Submit. In this case, feedback is given for an incorrect answer.

8.4.6.2. Best Practices for Providing Feedback

The immediacy of the feedback available to learners is a key advantage of online instruction, and is difficult to achieve in a traditional classroom environment.

You can target feedback for common incorrect answers to the misconceptions that are common for the level of the learner (for example, elementary, middle, high school, college).

In addition, you can create feedback that provides some guidance to the learner about how to arrive at the correct answer. This is especially important in text input and numeric input problems, because without such guidance, learners might not be able to proceed.

You should also include feedback for the correct answer to reinforce why the answer is correct. Especially in questions where learners are able to guess, such as multiple choice and dropdown problems, the feedback should provide a reason why the selection is correct.

8.4.6.3. Providing Hints for Problems¶

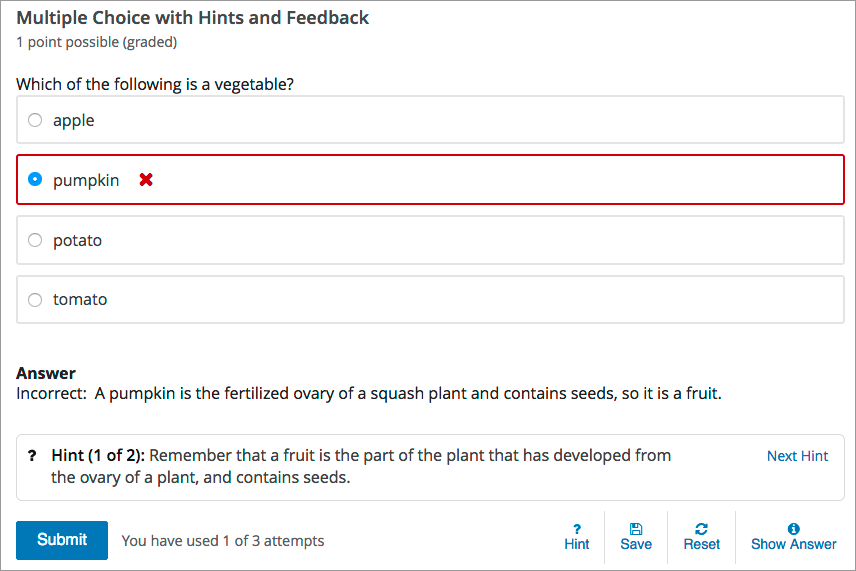

You can add one or more hints that are displayed to learners. When you add hints, the Hint button is automatically displayed to learners. Learners can access the hints by selecting Hint beneath the problem. A learner can view multiple hints by selecting Hint multiple times.

For example, in the following multiple choice problem, the learner selects Hint after having made one incorrect attempt.

The hint text indicates that it is the first of two hints. After the learner selects Next Hint, both of the available hints appear. When all hints have been used, the Hint or Next Hint option is no longer available.
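A minimal sketch of a problem with two hints might look like the following, assuming the `demandhint` element is placed after the response element in the problem's OLX. The question and hint text are hypothetical, and the topic for each problem type remains the reference for the exact syntax.

```xml
<problem>
  <multiplechoiceresponse>
    <!-- Hypothetical question text, used only for illustration. -->
    <label>Which of the following is a vegetable?</label>
    <choicegroup type="MultipleChoice">
      <choice correct="false">Apple</choice>
      <choice correct="true">Potato</choice>
    </choicegroup>
  </multiplechoiceresponse>
  <!-- Each hint element is revealed in order as the learner selects Hint. -->
  <demandhint>
    <hint>A fruit develops from a flower and contains seeds.</hint>
    <hint>A potato is a tuber that grows underground.</hint>
  </demandhint>
</problem>
```

With two `hint` elements defined, the first selection of Hint would show the first hint, and the next selection would show both.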

8.4.6.4. Best Practices for Providing Hints¶

To ensure that your hints can assist learners with varying backgrounds and levels of understanding, you should provide multiple hints with different levels of detail.

For example, the first hint can orient the learner to the problem and help those struggling to better understand what is being asked.

The second hint can then take the learner further towards the answer.

In problems that are not graded, the third and final hint can explain the solution for learners who are still confused.

8.4.6.5. Create Problems with Feedback and Hints¶

You create problems with feedback and hints in Studio. Templates with feedback and hints configured are available to make creating your own problems easier.

While editing a unit, in the Add New Component panel, select Problem. In the list that opens, select Common Problem Types. Templates for problems with feedback and hints are listed.

Add the problem type you need to the unit, then edit the component. The exact syntax you use to configure hints and feedback depends on the problem type. See the topic for the problem type for more information.

8.4.7. Awarding Partial Credit for a Problem¶

You can configure the following problem types so that learners can receive partial credit for a problem if they submit an answer that is partly correct.

By awarding partial credit for problems, you can motivate learners who have mastered some of the course content and provide a score that accurately demonstrates their progress.

For more information about configuring partial credit, see the topic for each problem type.

8.4.7.1. How Learners Receive Partial Credit¶

Learners receive partial credit when they submit an answer in the LMS.

In the following example, the course team configured a multiple choice problem to award 25% of the possible points (instead of 0 points) for one of the incorrect answer options. The learner selected this incorrect option, and received 25% of the possible points.
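One way that a configuration like this might be written in OLX is sketched below. It assumes the `partial_credit="points"` attribute on the response element and the `correct="partial"` and `point_value` attributes on the choice; the question and answer options are hypothetical. Check the multiple choice problem topic before relying on these attribute names.

```xml
<problem>
  <!-- partial_credit="points" enables per-choice partial credit (assumed syntax). -->
  <multiplechoiceresponse partial_credit="points">
    <!-- Hypothetical question text, used only for illustration. -->
    <label>Which value best approximates pi to two decimal places?</label>
    <choicegroup type="MultipleChoice">
      <choice correct="false">4</choice>
      <!-- This incorrect option awards 25% of the possible points instead of 0. -->
      <choice correct="partial" point_value="0.25">3.1</choice>
      <choice correct="true">3.14</choice>
    </choicegroup>
  </multiplechoiceresponse>
</problem>
```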

8.4.7.2. Partial Credit and Reporting on Learner Performance¶

When a learner receives partial credit for a problem, the LMS adds only the points that the learner earned to the grade. However, the LMS reports any problem for which a learner receives credit, in full or in part, as correct in the following ways.

Events that the system generates when learners receive partial credit for a problem indicate that the answer was correct. Specifically, the correctness field has a value of correct. For more information about events, see Problem Interaction Events in the EdX Research Guide.

The AnswerValue in the Student Answer Distribution report is 1, for correct.

The edX Insights student performance reports count the answer as correct.

Course teams can see that a learner received partial credit for a problem in the learner’s submission history. The submission history shows the score that the learner received out of the total available score, and the value in the correctness field is partially-correct. For more information, see Learner Answer Submissions.

8.4.7.3. Adding Tooltips to a Problem¶

To help learners understand terminology or other aspects of a problem, you can add inline tooltips. Tooltips show text to learners when they move their cursors over a tooltip icon.

The following example problem includes two tooltips. The tooltip that provides a definition for “ROI” is being shown.

Note

For learners using a screen reader, the tooltip expands to make its associated text accessible when the screen reader focuses on the tooltip icon.

To add the tooltip, you wrap the text that you want to appear as the tooltip in the clarification element. For example, the following problem contains two tooltips.

<problem>

<text>

<p>Given the data in Table 7 <clarification>Table 7: "Example PV

Installation Costs", Page 171 of Roberts textbook</clarification>,

compute the ROI <clarification><strong>ROI</strong>: Return on

Investment</clarification> over 20 years.

</p>

. . .

8.4.8. Problem Randomization¶

Presenting different learners with different problems or with different versions of the same problem is referred to as “problem randomization”.

You can provide different learners with different problems by using randomized content blocks, which randomly draw problems from pools of problems stored in content libraries. For more information, see Randomized Content Blocks.

Note

Problem randomization is different from the Randomization setting that you define in Studio. Problem randomization presents different problems or problem versions to different learners, while the Randomization setting controls when a Python script randomizes the variables within a single problem. For more information about the Randomization setting, see Randomization.
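To illustrate the second case, here is a hedged sketch of a single problem whose variables are randomized by a Python script. The variable names and question are hypothetical, and the sketch assumes that `$name` references in the problem are replaced with values that the script computes. When those values are regenerated is governed by the Randomization setting, not by the markup itself.

```xml
<problem>
  <!-- The Python inside the script element is left-aligned on purpose. -->
  <script type="loncapa/python">
import random
x1 = random.randint(1, 100)
x2 = random.randint(1, 100)
total = x1 + x2
  </script>
  <numericalresponse answer="$total">
    <!-- Hypothetical question; $x1 and $x2 are filled in from the script. -->
    <label>What is $x1 + $x2?</label>
    <formulaequationinput/>
  </numericalresponse>
</problem>
```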

Creating randomized problems by exporting your course and editing some of your course’s XML files is no longer supported.

8.4.9. Modifying a Released Problem¶

Warning

Be careful when you modify problems after they have been released. Changes that you make to published problems can affect the learner experience in the course and analysis of course data.

After a learner submits a response to a problem, the LMS stores that response, the score that the learner received, and the maximum score for the problem. For problems with a Maximum Attempts setting greater than 1, the LMS updates these values each time the learner submits a new response to a problem. However, if you change a problem or its attributes, existing learner information for that problem is not automatically updated.

For example, you release a problem and specify that its answer is 3. After some learners have submitted responses, you notice that the answer should be 2 instead of 3. When you update the problem with the correct answer, the LMS does not update scores for learners who originally answered 2 for the problem and received the wrong score.

For another example, you change the number of response fields in a problem from two to three. Learners who submitted answers before the change have a score of 0, 1, or 2 out of 2.0 for that problem. Learners who submitted answers after the change have scores of 0, 1, 2, or 3 out of 3.0 for the same problem.

If you change the weight setting for the problem in Studio, however, existing scores update when the learner’s Progress page is refreshed. In a live section, learners will see the effect of these changes.

8.4.9.1. Workarounds¶

If you have to modify a released problem in a way that affects grading, you have two options to ensure that every learner has the opportunity to submit a new response and be regraded. Note that both options require you to ask your learners to go back and resubmit answers to a problem.

In the problem component that you changed, increase the number of attempts for the problem, and then ask all of your learners to redo the problem.

Delete the entire problem component in Studio and replace it with a new problem component that has the content and settings that you want. Then ask all of your learners to complete the new problem. (If the revisions you must make are minor, you might want to duplicate the problem component before you delete it, and then revise the copy.)

For information about how to review and adjust learner grades in the LMS, see Adjust Grades for One or All Learners.